AI’s Island Problem - the next problem smart orgs are currently solving

AI has been a solo sport. That’s completely normal. It’s the next bottleneck we’re solving.

Innovation and breakthroughs start alone. Someone gets curious. They dig in. They figure out what works. They build instincts that aren’t documented anywhere because they don’t exist anywhere yet. Patterns don’t exist yet, but they start to emerge. Normal procedure, nothing wrong with it.

Most practitioners in companies, most of us included, are in exactly that phase with AI right now. They’ve got their setup dialed in. Custom instructions. A few skills, proudly shown around (“look at my skill!”). Maybe a structured Claude project or two. It works. They feel fast.

The most advanced build their own agentic Chief of Staff and even share it on GitHub.

And then, at some point, we all hit the ceiling. It can’t go on like this. All of this needs to become shared, and we need the infrastructure to share it.

I see it in my AI-augmented PM course. Participants come in as individuals. They learn to work systematically with AI - on research, on strategy, on prioritization. By the end of the course, they ace it. All of them. Real progress.

But then all of them arrive at the same realization: “I’m going to go back and be an island.”

And everyone in the room recognizes it. Immediately. In that moment.

Because the organization hasn’t moved yet. Teammates still work the old way. The AI doesn’t know what the product team decided last quarter. The marketing brief lives in someone’s head. Strategy exists as a slide that was shown once in an all-hands and never written down properly.

The individual got faster. The organization didn’t. Welcome to yesterday.

The problem is not exactly new

This is a human problem first - and it already existed without any AI.

(The objections you will read later on are also orthogonal to the topic of AI.)

It’s simple: If you want someone to work in your direction — human or AI — you have to make sure they have your information. In a form they can actually use. That they understand. The implications of that are the same whether “someone” is a new hire, a contractor, or a model running in your SDLC pipeline.

With a human, we think it’s easier to patch the gaps. We are more resilient. But are we? Most suffering in companies exists precisely because we are not.

With humans, the patching goes like this: You answer questions. You have context from two years of working together. A smart person infers the rest and adjusts. Organizations rely on this mechanism constantly. (This is also why so much context leaves the building every time someone quits.)

AI can’t do any of that. It needs the information written down. Precise. Accessible. In a form it can process and act on.

So when teams try to use AI at scale and it produces misaligned output — that’s usually not an AI problem. It’s an information problem that was already there. AI just stops pretending it doesn’t exist.

I’ve watched organizations that weren’t particularly process-oriented suddenly become very interested in structure and “standards”. Not because a consultant told them to. Because they saw what happened when AI finally had something solid to work with. The output difference is stark enough to make its own argument. Once you get greedy about leveraging AI, you get greedy about process and standards.

On the upside, AI rewards organizational clarity. On the downside, it punishes confusion and vagueness with the same precision. It amplifies in both directions.

Your room and the living room

There’s a mental model I keep coming back to, because it gets the dynamic exactly right.

Think of two spaces.

Your room is yours. You experiment. You try out a new AI setup, draft a positioning idea, play with a prompt, develop a heuristic. Nothing in your room is a commitment. It’s your sandbox. Working fast and dirty there is a feature, not a problem. Nobody should tell you how to arrange your own room.

And you need your room. You cannot start thinking freely in the shared context. It’s the same problem I described in earlier posts: my brain freezes when I have to start on the developer’s command line. In the settled infrastructure. That’s for later. My room only carries my own constraints.

The living room is shared. It’s what everyone works from — the marketing team, the engineer, the product manager, the AI agent running in your pipeline. What’s in the living room is agreed upon. Truth. It’s the strategy that’s been decided, the brand tone that’s been validated, the technical constraints that are real. It’s the context your AI reads when it needs to work in the same direction as everyone else.

The asymmetry matters: what’s in your room is your business. What’s in the living room is everyone’s business. The living room has rules.

And there’s a process that defines how things move between your room and the living room. You don’t just dump your room into the living room when you’ve had an idea. You don’t leave your dirty, sweaty sports clothes lying on the living room sofa either. You try things. You discuss. When there’s actual agreement, not just “nobody objected” but real alignment, it gets committed to shared truth. That act of commitment is the moment where “I think we should do this” becomes “this is what we do now.”

Before the commit: your freedom. After the commit: your right to be heard when someone ignores it.

If you want someone to follow your “truth”, or even insist on it, it’s your obligation to commit it, explain it, and make sure it’s understood.

That distinction is the line of accountability. It’s simple. It scales across organizations of any size. And it works whether your “living room” is a Git repository, a well-maintained Confluence space, or something else — as long as it’s genuinely shared and genuinely up to date.

It is really simple. Rationally.

The friction - real and imagined

That’s the simple model. And I can already hear the objections — because I hear them every time this topic comes up. Last week at AI Camp Berlin it was the same. A lot of attention, a lot of friction. Three sessions from three people on the same topic. I’ll take that as evidence that something real is at stake.

The first wave of friction isn’t about tools. It’s about the model itself.

“Who keeps the living room? Who decides what goes in?” Reasonable question. The answer: the team does, together. Which means someone has to care. There’s usually one person per organization who takes this seriously — call them the guardian of the living room. Not an enforcer. Just someone who notices when the shared context is stale, contradictory, or missing, and does something about it.

“We already have Confluence and nobody reads it.” Yes. Because Confluence is a dump, not a living room. The difference isn’t the tool. It’s the discipline of keeping it current and the expectation that people actually use it. An unmaintained living room is just another room.

“You can’t expect this from the CEO.” I find this one interesting, because it conflates the tool with the ask. The ask isn’t “learn Git.” The ask is: if you want a hundred people — or a thousand — to work in your direction, you have to make your direction-giving documents available to them. That’s it.

“I told them in a meeting” stopped being a strategy well before AI arrived. It just used to work better, because humans are good at patching gaps from memory and inference. AI can’t do that. The meeting wasn’t in the context. It might as well not have happened. If the truth isn’t shared in the repo, it does not exist. No way around it. Simple. If you don’t like it, show me an alternative, modulo details.

What the infrastructure actually looks like

The living room needs content. Specifically: the context that any discipline might need from any other.

Strategy. Positioning. Tone guidelines. Technical constraints. User research findings. What “done” means for your specific product. Who the user actually is and what they struggle with. Each discipline maintains its own layer — product writes for engineers and AI, marketing writes for product and AI, design writes for everyone. Everyone reads everything. The AI reads everything.

The key question for any document: Does someone else — or an AI — need this to work in the same direction as me? If yes, it belongs in the living room. If it only helps you personally, it stays in your room.

But if your (hopefully) highly efficient, tuned-up, AI-fed production machine needs the info, there’s no way around entering it into the infrastructure. Otherwise you might as well throw the whole agentic acceleration out of the window.

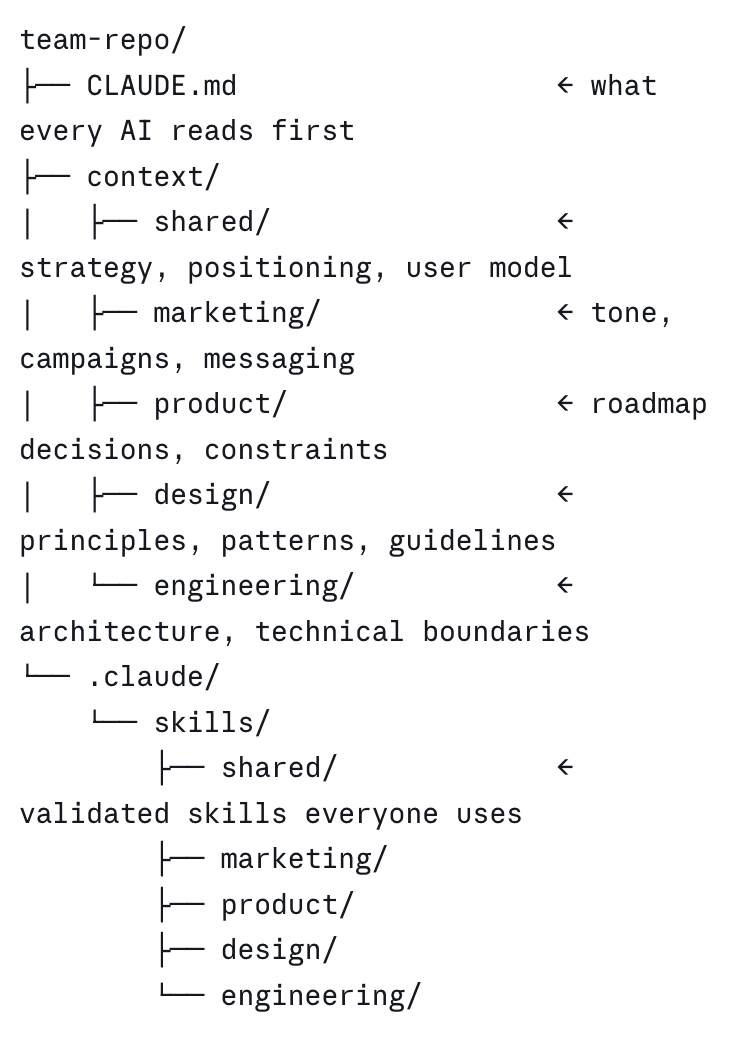

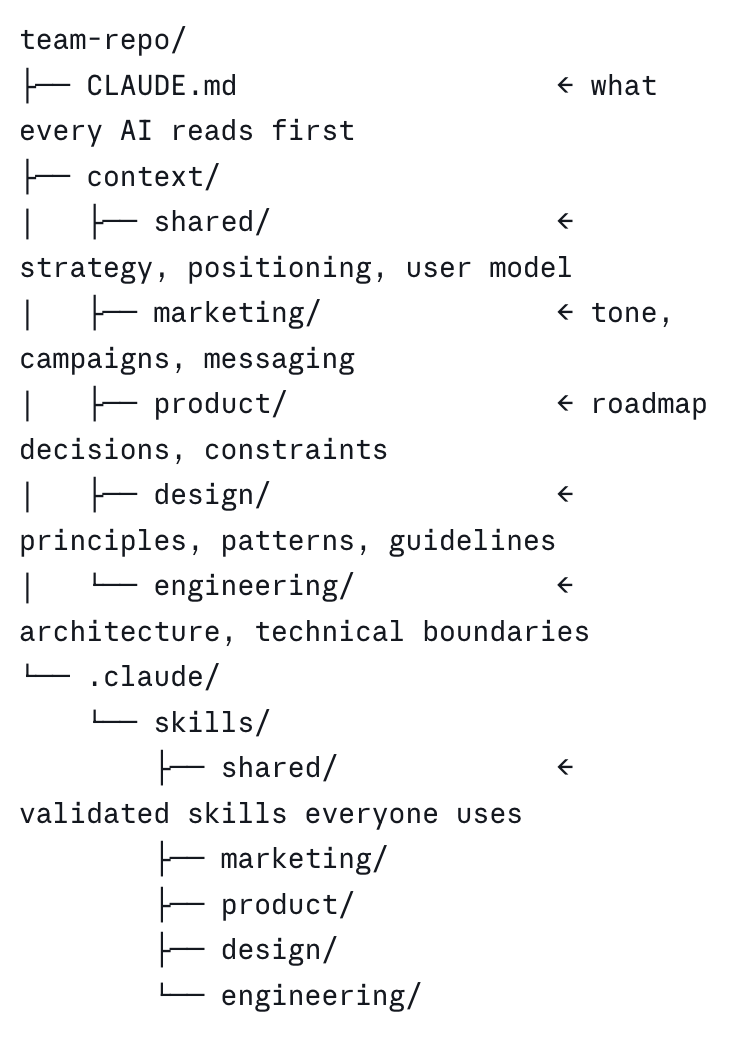

In practice, it looks something like this:

Each discipline writes its own layer. All disciplines read all layers. The AI reads all layers. That’s the whole system. (Yes, I do know that skills under .claude are not organized in layers. Please take this sketch as conceptual, and let’s not derail the discussion.)
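For readers who think better in code, here is a minimal sketch of what "the AI reads all layers" could mean mechanically. Everything in it is an assumption for illustration: the function name `load_living_room`, the idea of one directory per discipline, and the file names are hypothetical, not a prescribed layout and not how any particular tool organizes its files.

```python
from pathlib import Path


def load_living_room(root: Path) -> str:
    """Concatenate every Markdown file under `root` into one context string.

    Assumed (hypothetical) layout: one subdirectory per discipline, e.g.
    root/product/strategy.md or root/marketing/positioning.md.
    """
    sections = []
    # Sorted so the resulting context is stable from run to run.
    for path in sorted(root.rglob("*.md")):
        # First path component below root is treated as the discipline layer.
        layer = path.relative_to(root).parts[0]
        text = path.read_text(encoding="utf-8").strip()
        sections.append(f"## [{layer}] {path.name}\n\n{text}")
    return "\n\n".join(sections)
```

Calling `load_living_room(Path("living-room"))` would return one string with a labeled section per document, which could then be handed to whatever model or agent you use. The point is not the code. The point is that it only works if the documents exist.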

Alongside the context, you share the tools. Custom skills, prompt patterns, agent configurations that the team has developed and validated. Not every experiment from every room — only the ones that have proven themselves worth sharing. The skill a PM built to synthesize research faster shouldn’t stay with that PM. But it shouldn’t go into the living room until it reliably works for others too.

The result, of course, compounds. Every discipline makes every other discipline’s AI work better. The marketing context helps the product AI. The engineering constraints help the design AI. Context shared deliberately is context that doesn’t have to be rediscovered from scratch every time someone starts a new conversation.

About those three Git commands

Speaking of friction: the tool question always comes up eventually. “Not everyone can be expected to know Git.”

I find this argument strange — not because Git is trivial, but because of what we’ve already normalized. People in organizations manage password managers, VPN configurations, SSO setups, two-factor authentication, enterprise access control systems. Nobody says “you can’t expect a marketing manager to deal with SSO.” It’s just infrastructure. You learn it because it gives you access to what you need.

Three Git steps (commit, push, pull request) are genuinely less complex than the average enterprise access management workflow. And they give you a shared, versioned, auditable living room in return. That’s not a technical luxury.

But here’s the thing: not everyone has to do it themselves. Someone on the team can handle the mechanics. The CPO doesn’t have to run git push. They do have to write down the strategy. Those are different problems, and only one of them is actually hard.

Git is just the excuse. The real question is simpler and harder: do you want a real living room, or not?

The real unlock

The teams that figure this out don’t just get better AI output. They get a clearer organization. And they will ace execution and minimize exhaustion.

Because you can’t write a decent positioning.md or a shared product strategy document if you haven’t actually decided what your positioning or strategy is. The act of writing it down — clearly enough that an AI can act on it — forces the conversation that should have happened anyway.

That’s the forcing function.

Not “AI does the work.” Not “AI replaces the thinking.” Just: AI makes it very hard to keep pretending your organization is aligned when it isn’t.

If you’re a solo act right now, that’s fine. That’s where all of this starts. The ceiling is right above you, though, and you’ll hit it. The teams pulling ahead aren’t just better at prompting. They’ve figured out how to share context deliberately. How to make their work legible, to each other and to the machines working alongside them.

That’s the next game now. Forget about the fog of “should we do this”.